Two recent AI security events have made one thing clear. We do not yet have a robust or widely accepted shared responsibility model for AI systems, agents, MCP protocols, and connected applications.

This is not a new problem, but it is unfolding in a far more complex environment than anything we have faced before.

Lessons from the Cloud, But Not a Simple Replay

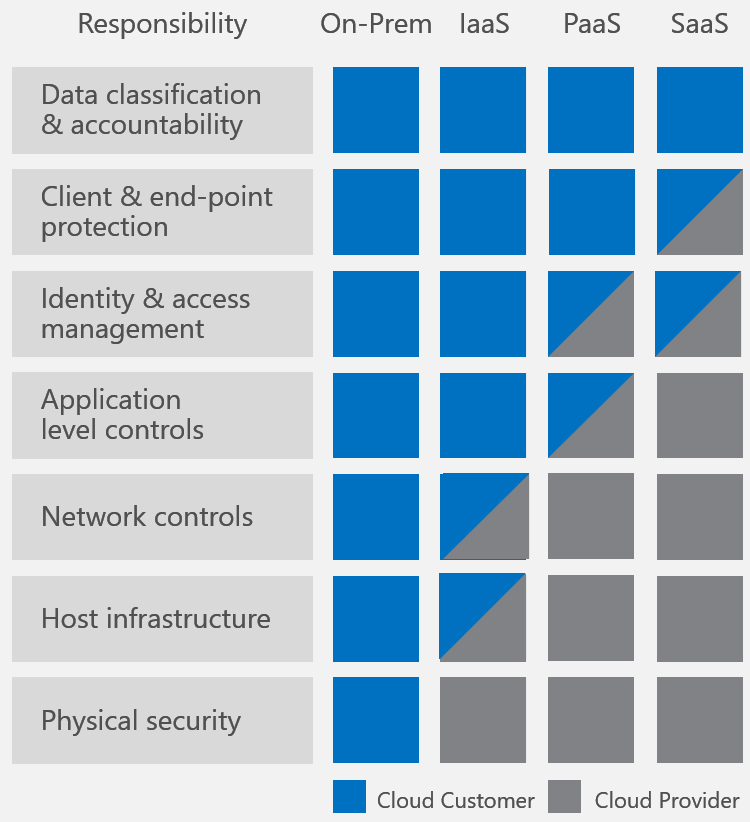

In the early days of cloud adoption, there was significant ambiguity about who was responsible for what. As organizations moved from on premises infrastructure to IaaS, PaaS, and SaaS models, both providers and customers were motivated to accelerate adoption while also limiting liability.

The shared responsibility model eventually brought structure to this confusion. Even then, it was not simple or immediately accepted. The standard cloud model from Microsoft included 28 control areas, with only six categorized as shared responsibility. Despite this limited overlap, it took years for providers, customers, and regulators to align.

By 2021, even that model began to evolve. Google Cloud introduced the concept of shared fate, signaling that providers should not only define responsibility boundaries, but also actively help customers secure their environments through better defaults and tooling.

That evolution took more than a decade. AI is moving faster and introducing far more complexity at the same time.

AI Expands the Scope of Responsibility

Early attempts to define an AI shared responsibility model already show how much more complicated this domain is. Compared to cloud’s contained structure, AI introduces a much larger and more interconnected set of risks.

Some emerging models, such as the one from Return on Security approach 100 control areas, with 30 categorized as shared responsibility and a significant portion assigned to providers. This expansion reflects not just scale, but also ambiguity.

AI systems introduce new categories of risk that do not map cleanly to traditional boundaries. These include prompt injection, model drift and hallucinations, agent autonomy, tool integrations, data leakage, and supply chain dependencies across models and APIs.

Each of these areas raises difficult questions about ownership. Prompt injection can involve model design, application logic, and input validation. Agent behavior depends on model training, framework design, and enterprise configuration. These overlapping layers make it difficult to assign responsibility in a clear and consistent way.

If it took years to stabilize a relatively simple cloud model, agreement in AI will take significantly longer. In the meantime, the lack of clarity creates real exposure.

When Shared Responsibility Becomes Deflection

Recent incidents highlight how unsettled this landscape has already become. In one case reported by Dark Reading, Salesforce disputed vulnerability findings by classifying them as configuration specific issues and emphasizing human in the loop oversight.

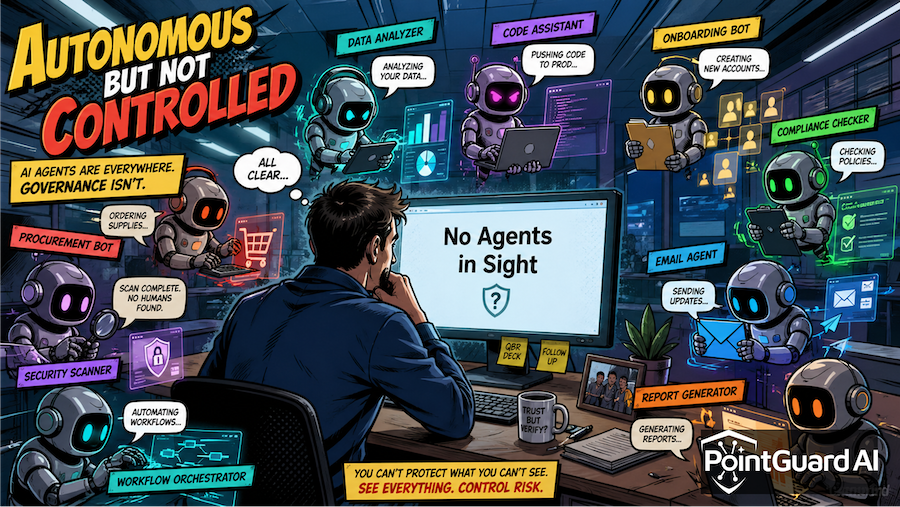

While human in the loop controls have value in certain scenarios, they are not a scalable foundation for security. AI agents are designed to automate tasks. Introducing manual checkpoints for routine operations creates friction and increases alert volume, making it harder for security teams to focus on real threats.

This approach also shifts responsibility away from system design and toward the customer. Instead of building safer defaults, the burden is placed on organizations to compensate through oversight. This weakens the concept of shared responsibility and turns it into responsibility deflection.

In another incident reported by ITPro, security researchers found structural issues with with the MCP protocol. The initial response from Anthropic (the original creator of MCP) emphasized that secure implementation was the responsibility of developers rather than the protocol designers. This position overlooks the interconnected nature of AI ecosystems, where decisions made at one layer directly affect security outcomes at another.

Responsibility Is Outpacing Accountability

AI shared responsibility is being defined in real time. Providers are still determining how much responsibility they will accept. Enterprises often assume platforms include more built-in protection than they actually do. Developers continue to assemble models, APIs, agents, and tools without a consistent security framework governing how they interact.

The result is a growing gap between responsibility and accountability. Many emerging models suggest that a majority of responsibility lies with providers or shared domains. In practice, providers are unlikely to fully accept that level of ownership without regulatory or market pressure.

Until standards mature, organizations must assume that responsibility is fragmented and evolving. That requires a more cautious and proactive approach.

What Organizations Should Do Now

Organizations cannot afford to wait for consensus. The pace of AI adoption and the scale of potential risk make delay impractical.

A practical approach starts with acknowledging that responsibility boundaries are unclear. Security teams should validate controls across the entire AI stack rather than focusing only on the model layer. AI systems should be treated as ecosystems, where risks emerge from interactions between components.

It is also important to limit reliance on manual controls such as human in the loop for core security enforcement. While useful in specific high-risk scenarios, these controls are not sufficient as a primary defense. Continuous monitoring of AI behavior, inputs, and outputs is essential to detect anomalies and prevent misuse.

Most importantly, organizations must recognize that deploying AI introduces new security and governance obligations, regardless of how responsibility is formally defined.

How PointGuard AI Can Help

PointGuard AI addresses the gaps created by today’s unclear and evolving shared responsibility landscape. Instead of relying on assumptions about what providers secure and what customers must handle, it delivers visibility and protection across the full AI application stack.

PointGuard AI continuously monitors AI interactions, including prompts, responses, and agent actions. This enables organizations to detect prompt injection attacks, data leakage, and abnormal behavior in real time. Security policies can be enforced at runtime, reducing reliance on manual intervention while maintaining operational efficiency.

The platform also protects sensitive data as it moves through models, tools, and integrations, ensuring exposure risks are mitigated regardless of where responsibility technically resides. It extends protection to agent driven workflows, including tool usage and external actions, where traditional controls are often limited.

With unified visibility across AI components, security teams can better understand and manage risk in complex environments involving multiple providers and technologies.

Until the industry reaches clear and enforceable standards, organizations must take ownership of their AI security posture. PointGuard AI provides the control and visibility needed to operate safely in an environment where responsibility is still being defined.