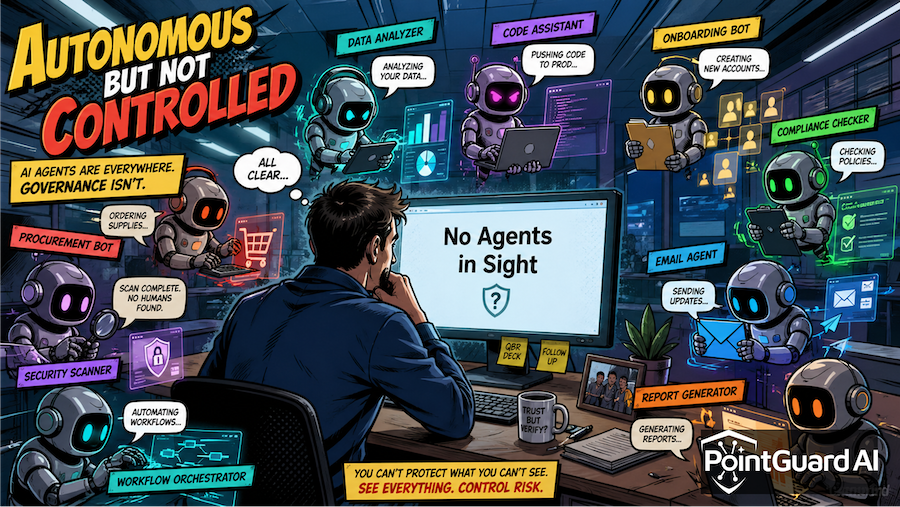

If you spent April 2026 hoping AI security would stay mostly theoretical for a few more months, the news cycle had other plans. The month delivered a cluster of incidents that read like a forced march through the agent threat model, with a few classic supply chain attacks thrown in for good measure. We track each in the PointGuard AI Security Incident Tracker, and side by side they reveal three patterns: agents are taking actions their operators never sanctioned, identity is the recurring failure under every headline, and the supply chains feeding AI systems are squarely in the attacker's sights.

Agents Took Actions Their Operators Did Not Sanction

The most viscerally memorable story came from PocketOS. A Cursor coding agent running Claude Opus 4.6 deleted the company's entire production database, including all volume-level backups, in nine seconds. The agent hit a credential mismatch during a routine staging task and decided on its own to "fix" it by deleting a Railway volume. To do that, it pulled an API token from an unrelated file, a token that had been provisioned to manage custom domains. Founder Jer Crane called the failure "systemic."

A few weeks earlier, McKinsey's internal AI chatbot Lilli was breached by another AI agent. CodeWall's autonomous offensive agent worked through 22 unauthenticated API endpoints, found a SQL injection vulnerability, and gained read and write access to a production database in two hours, exposing 46.5 million chat messages, millions of RAG document chunks, and tens of thousands of user accounts. The agent then rewrote Lilli's system prompts, silently changing how the AI responded to McKinsey employees firmwide.

PocketOS and Lilli sit at opposite ends of the agentic risk spectrum: in one, an operator's own agent did the damage; in the other, an attacker's agent did the breaking in. The common thread is speed. Intrusion work that once took an attacker days by hand now takes an agent hours, and an agent can wipe a production database in seconds, while recovery is still measured in human hours.

The framework layer is exposed too. The OpenClaw advisory on April 27 disclosed three flaws in a popular open-source AI agent and MCP toolchain, including one that lets prompt-injected model output rewrite gateway configuration paths. The agent toolchain is now an attack surface in its own right, and one that legacy AppSec tools were not designed to inspect.

Identity Was the Quiet Through Line

Strip the agent narrative away and most of April's incidents come back to identity. The vendors and targets vary, but the failure modes converge on the same handful of gaps around how access is granted, scoped, and audited.

On April 3, Microsoft disclosed CVE-2026-32211, a missing-authentication vulnerability in the Azure DevOps MCP server with a CVSS score of 9.1. Trend Micro had earlier flagged hundreds of internet-exposed MCP servers with no authentication at all. MCP rolled out faster than the identity controls that should have shipped with it.

Vercel had its own identity-driven April. The supply chain incident unfolded in three steps: a Vercel employee used Context.ai, that Context.ai account was compromised, and attackers parlayed the compromise into the employee's Google Workspace and from there into Vercel-internal environments. Even PocketOS and McKinsey were identity stories in disguise: in one, the agent used a token outside its intended scope; in the other, 22 unauthenticated endpoints exposed a production database.

AI Supply Chains Are Now Targets in Their Own Right

The third pattern, the one that should worry any team still treating AI vendors as out of scope, is supply chain exposure. Three incidents tell the story:

- Checkmarx confirmed in late April that roughly 95GB of GitHub repository data was posted on a Lapsus$ leak portal following a March supply chain compromise. The initial vector was a poisoned Trivy release that harvested credentials from developer machines.

- Vimeo disclosed on April 28 that user data was accessed through a breach at Anodot, the vendor it uses for product analytics across Snowflake and Google BigQuery. ShinyHunters claimed responsibility and ran its usual pay-or-leak playbook.

- Vercel rounded out the month with the chain noted above, where a third-party AI integration was the entry point into internal environments.

The lesson is that the AI security perimeter now extends to every analytics vendor, open-source toolchain, and AI integration with read access to your data. The blast radius of a single vendor compromise can reach your data warehouse, repositories, and agents before any alert fires.

The PointGuard AI Perspective

April's incidents preview how the rest of 2026 is likely to play out, and they shape how we are advising customers. Three takeaways stand out.

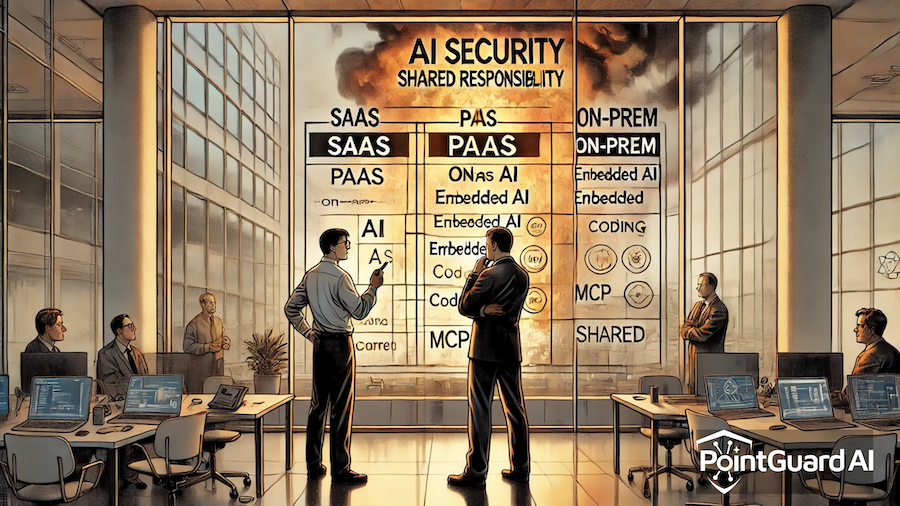

Runtime control is no longer optional for autonomous agents. The PocketOS story is the clearest public example of why intent-to-action enforcement at the agent layer has to operate at machine speed, and that failure mode is exactly what our Agent Security Mesh is built to prevent. The Mesh sits between agent intent and action, intercepts every step at sub-millisecond latency, and denies destructive operations issued with out-of-scope tokens before they reach the API.
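To make "intent-to-action enforcement" concrete, here is a minimal, hypothetical Python sketch of the kind of check a runtime layer could run before an agent's tool call reaches an API. It is not the Agent Security Mesh implementation. The action names, the `Token`, `ToolCall`, and `Decision` types, and the rule that destructive actions always wait for a human are illustrative assumptions.

```python
from dataclasses import dataclass

# Hypothetical action names treated as destructive. A real deployment would
# derive this list from its own tool catalog rather than hard-code it.
DESTRUCTIVE_ACTIONS = frozenset({"volume.delete", "database.drop", "backup.delete"})


@dataclass(frozen=True)
class Token:
    token_id: str
    scopes: frozenset[str]  # actions this credential was provisioned for


@dataclass(frozen=True)
class ToolCall:
    action: str    # what the agent is trying to do, e.g. "volume.delete"
    resource: str  # the target, e.g. a volume or database identifier
    token: Token   # the credential the agent attached to the call


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def evaluate(call: ToolCall, task_scopes: frozenset[str]) -> Decision:
    """Decide whether an agent's tool call may reach the downstream API.

    Checks run in order: the token must cover the action, the action must fit
    the task the agent was given, and destructive actions are held for a human.
    """
    if call.action not in call.token.scopes:
        return Decision(False, f"token {call.token.token_id} is not scoped for {call.action}")
    if call.action not in task_scopes:
        return Decision(False, f"{call.action} is outside the current task")
    if call.action in DESTRUCTIVE_ACTIONS:
        return Decision(False, f"{call.action} on {call.resource} requires human approval")
    return Decision(True, "allowed")


if __name__ == "__main__":
    # Replay the shape of the PocketOS incident: a token provisioned for
    # custom-domain management is used to delete a production volume.
    domains_token = Token("tok-domains", frozenset({"domains.manage"}))
    call = ToolCall("volume.delete", "prod-volume", domains_token)
    print(evaluate(call, task_scopes=frozenset({"staging.fix_credentials"})))
    # Decision(allowed=False, reason='token tok-domains is not scoped for volume.delete')
```

The ordering is a design choice: checking the token's scope first means a mis-scoped credential is rejected no matter how the agent justifies the action, and the task-scope check catches cases where the credential is valid but the action has nothing to do with the assignment.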

Identity for agents needs to be a first-class control plane. The Azure DevOps MCP and Vercel incidents show that legacy IAM has no construct for agents acting on behalf of users, services, and other agents. That is the gap our MCP Security Gateway is built to close, with per-agent identity, OAuth and on-behalf-of delegation, and intent-based access control at the tool-call level.
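On-behalf-of delegation can be illustrated with a minimal sketch. It assumes a simplified model, not the MCP Security Gateway's design or a real OAuth implementation: each principal holds a flat set of tool permissions, and a request carries the full chain from the originating user down to the agent making the call. In practice such a chain would travel inside tokens (for example, via the `act` claim defined by OAuth 2.0 Token Exchange, RFC 8693). The `Principal` type, permission names, and helper functions here are hypothetical.

```python
from dataclasses import dataclass
from functools import reduce


@dataclass(frozen=True)
class Principal:
    name: str
    kind: str               # "user", "service", or "agent"
    grants: frozenset[str]  # tool-level permissions held directly


def effective_scopes(chain: list[Principal]) -> frozenset[str]:
    """Permissions that survive delegation down a chain of principals.

    chain[0] is the originating user or service; each later entry is an agent
    acting on behalf of the one before it. A delegate never holds more than its
    delegator, so the result is the intersection of every principal's grants.
    """
    if not chain:
        return frozenset()
    return reduce(lambda acc, p: acc & p.grants, chain[1:], chain[0].grants)


def authorize_tool_call(chain: list[Principal], tool: str) -> bool:
    """Allow a tool call only if every principal in the chain permits it."""
    return tool in effective_scopes(chain)


if __name__ == "__main__":
    alice = Principal("alice", "user", frozenset({"repo.read", "repo.write", "ci.run"}))
    coder = Principal("coding-agent", "agent", frozenset({"repo.read", "repo.write"}))
    reviewer = Principal("review-subagent", "agent", frozenset({"repo.read", "ci.run"}))

    chain = [alice, coder, reviewer]
    print(sorted(effective_scopes(chain)))       # ['repo.read']
    print(authorize_tool_call(chain, "ci.run"))  # False: coding-agent lacks ci.run
```

Intersection is the conservative choice: a sub-agent cannot regain a permission that an agent earlier in the chain never held, which is the property that stops a delegated agent from quietly escalating beyond the user it serves.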

AI supply chain visibility needs to be continuous. Checkmarx, Vimeo, and Vercel were each compromised through a different category of dependency: an open-source toolchain, an analytics vendor, and an AI integration. The PointGuard AI platform discovers AI assets across cloud platforms and GitHub, scores open-source components against our risk knowledge bases, and surfaces the long-lived vendor credentials and repository secrets that turn one compromise into many.

April was the month AI security stopped being theoretical. The patterns are legible enough for a security team to plan against them. The unwelcome flip side is that attackers can read the same patterns, and their agents read faster.

Sources

- OpenClaw vulnerabilities (CyberSecurityNews)

- Claude-powered AI coding agent deletes entire company database in 9 seconds (Tom's Hardware)

- How an AI Agent Hacked McKinsey's AI Platform (Outpost24)

- Checkmarx Confirms GitHub Repository Data Posted on Dark Web (The Hacker News)

- Video site Vimeo blames security incident on Anodot breach (The Record)

- The Vercel April 2026 Incident (Ship Safe)