Gartner analyst Dennis Xu is one of the more credible voices shaping how enterprises think about AI security platforms, so his half-joking suggestion to ban Microsoft Copilot on Friday afternoons is worth paying attention to. His comment, reported by The Register, reflects a concern that many organizations are quietly grappling with: AI tools are being trusted faster than they can be secured.

What sounds like a light remark is really a signal of something deeper. It highlights the widening gap between adoption and control, and the uncomfortable reality that today’s AI assistants are being used in environments where mistakes carry real consequences.

The Problem Isn’t Just Accuracy

At the core of Xu’s observation is a fundamental issue. Tools like Copilot cannot be relied upon to consistently produce accurate and safe outputs without human review. In isolation, that might seem manageable. In practice, it quickly becomes unsustainable.

Teams are now generating code, documents, and decisions at a pace that assumes automation is trustworthy. The expectation that every output will be carefully validated creates friction that undermines productivity. Over time, review fatigue sets in, attention drops, and risk increases. The very scale that makes AI valuable is what makes human oversight insufficient.

Even when outputs are technically correct, another risk emerges. AI assistants make it extremely easy to expose sensitive information. The experience feels similar to sending an email to the wrong recipient because address auto-fill picked the wrong name, but the consequences are amplified. AI systems can pull in internal data, summarize it, and surface it in ways that were never intended. When users are moving quickly, these small mistakes can escalate into enterprise-wide data exposure.

Human Vigilance Does Not Scale

The idea of avoiding AI use at the end of the week resonates because it reflects how people behave under pressure. When deadlines approach, attention narrows and shortcuts are taken. However, positioning the solution as “be more careful” misses the point.

Security has never been solved through perfect user behavior. Mistakes are inevitable, especially in high-velocity environments. AI only increases that velocity. If the safety model depends on users catching every issue, it is already broken.

This is a familiar lesson in cybersecurity. Phishing, misconfigurations, and accidental data exposure persist not because users are careless, but because systems are not designed to account for human fallibility. The same principle now applies to AI.

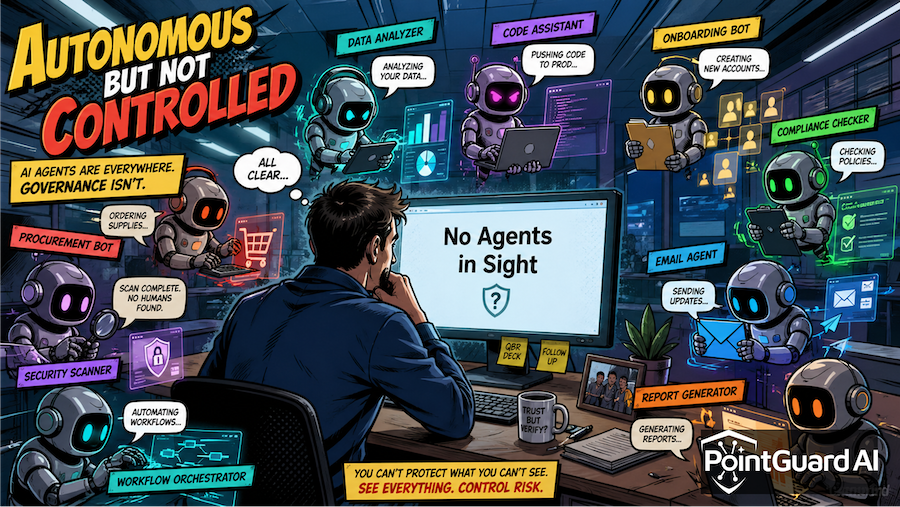

AI Systems Are Becoming Critical Infrastructure

AI assistants and agents are no longer isolated tools. They are becoming deeply embedded in enterprise systems, connected to sensitive data, and increasingly capable of taking action on behalf of users. This combination of access and autonomy changes their risk profile entirely.

These systems should be treated as critical infrastructure. Their role is closer to identity systems or cloud control planes than to traditional productivity software. They mediate access to data, influence decisions, and in some cases execute actions directly.

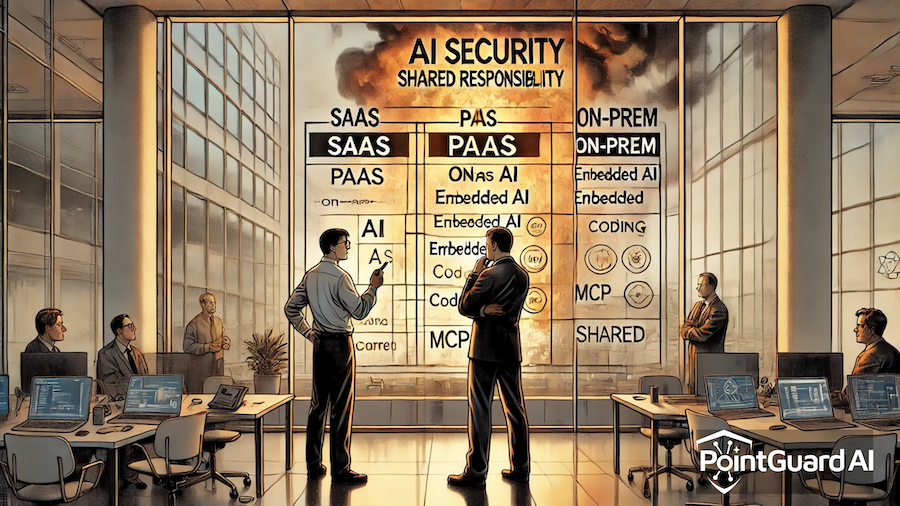

Securing them requires a shift in mindset. It is not enough to secure endpoints or monitor outputs. Organizations need comprehensive protection across the entire AI lifecycle, including how data is accessed, how prompts are constructed, how outputs are generated, and how actions are executed.

Real Incidents Are Already Happening

This is no longer a theoretical concern. AI-related security incidents are being reported with increasing frequency, and they illustrate how these risks materialize in real environments.

The PointGuard AI Security Incident Tracker documents a range of examples that highlight the scope of the problem:

https://www.pointguardai.com/ai-security-incident-tracker

Prompt injection attacks have shown how malicious instructions can be embedded in external content and then executed by AI systems with access to internal tools. Data leakage incidents have demonstrated how sensitive information can be exposed through seemingly routine queries. Vulnerabilities in AI-assisted coding tools have raised concerns about introducing exploitable flaws at scale. At the same time, supply chain risks are emerging as organizations rely on external models, plugins, and MCP servers that may not be fully trusted.

What connects these incidents is not just the technology, but the lack of consistent control. AI systems operate across boundaries that traditional security tools were never designed to manage. They interact with multiple systems, invoke external services, and make decisions in ways that are difficult to monitor in real time.

The Need for Control at the Interaction Layer

Addressing these risks requires more than reactive defenses. It requires control to be embedded directly into how AI systems operate.

This means enforcing zero-trust principles for every interaction, validating inputs and outputs in real time, and ensuring that access to data and tools is governed by context. Instead of assuming that requests are safe, systems must continuously verify and enforce policy.
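To make the idea concrete, here is a minimal Python sketch of default-deny mediation for agent tool calls. It is an illustration under stated assumptions, not the API of PointGuard AI or any other product: the names `ToolRequest`, `Policy`, and `mediate`, the path-prefix allow-list, and the single redaction pattern are all hypothetical. A real deployment would need far richer context (user identity, data classification, session state) and far better output inspection than one regular expression.

```python
import posixpath
import re
from collections.abc import Callable
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolRequest:
    """One attempted action by an agent (hypothetical shape)."""
    agent: str
    tool: str
    resource: str


@dataclass
class Policy:
    # Explicit allow-list: (agent, tool) -> permitted resource prefixes.
    # Anything not listed here is denied by default.
    allowed: dict[tuple[str, str], tuple[str, ...]] = field(default_factory=dict)

    def authorize(self, req: ToolRequest) -> bool:
        # Normalize first so "../" segments cannot escape an allowed prefix.
        resource = posixpath.normpath(req.resource)
        prefixes = self.allowed.get((req.agent, req.tool), ())
        return any(resource.startswith(p) for p in prefixes)


# Illustrative output check only: strings shaped like US Social Security numbers.
SENSITIVE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def mediate(policy: Policy, req: ToolRequest,
            run_tool: Callable[[ToolRequest], str]) -> str:
    """Check every request against policy, then filter the tool's output."""
    if not policy.authorize(req):
        raise PermissionError(
            f"{req.agent} may not use {req.tool} on {req.resource}")
    return SENSITIVE.sub("[REDACTED]", run_tool(req))


if __name__ == "__main__":
    policy = Policy({("report-bot", "read_file"): ("/shared/reports/",)})
    request = ToolRequest("report-bot", "read_file", "/shared/reports/q3.txt")
    # A stand-in tool; a real one would read the file or call a service.
    print(mediate(policy, request, lambda r: "Owner SSN 123-45-6789 on file"))
```

The design choice that matters here is that the check runs on every call and fails closed: an agent, tool, or resource that the policy does not explicitly name is refused, regardless of what the prompt or the user intended.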

This approach shifts security from a downstream activity to an integral part of the AI execution layer. It reduces reliance on user vigilance and creates a framework where both expected and unexpected behaviors can be managed.

How PointGuard AI Can Help

PointGuard AI is designed around this exact challenge. It treats the agentic ecosystem as a unified surface that requires consistent, end-to-end protection rather than fragmented controls.

At the center of the platform is the MCP Security Gateway, which introduces a new level of control over how AI agents interact with data, MCP servers, and connected tools. The gateway enforces zero-trust authorization for every action, ensuring that agents only access what they are explicitly permitted to use. It also applies policy enforcement in real time, allowing organizations to define and enforce rules around data usage, prompt behavior, and output handling.

By mediating interactions across the agentic stack, the MCP Security Gateway reduces the risk of over-permissioned access, prevents unintended data exposure, and ensures that AI-driven actions align with enterprise policies. It creates a controlled execution environment where security is not dependent on perfect user behavior.

Beyond the gateway, the broader Agentic Security Platform provides visibility into AI usage, monitors runtime activity, and enforces governance across the full lifecycle. This holistic approach is essential because risks do not exist in isolation. They emerge from the interactions between agents, data, and systems.

The Bottom Line

Dennis Xu’s comment may have been delivered with humor, but it reflects a serious and growing issue. AI adoption is accelerating, while the controls needed to secure it are still catching up.

Organizations cannot rely on users to slow down or catch every mistake. They need systems that assume mistakes will happen, manage the resulting risk continuously, and enforce control at the point where AI interacts with the enterprise.

The future of AI security will not be defined by better prompts or more careful reviews. It will be defined by how effectively organizations can govern the systems that are now acting on their behalf.