When cybersecurity incidents make headlines, the story usually involves stolen customer data, ransomware attacks, or corporate secrets leaking onto the internet. A recent incident involving Google’s Gemini API highlights a different type of risk that is emerging alongside the rapid adoption of generative AI, and it is the kind of risk that can cripple a small team without ever touching a single customer record.

In this case, the damage was not a data breach. It was a bill.

As reported by The Register, a developer found that a stolen Gemini API key had been used to generate more than $82,000 in usage charges in roughly 48 hours, a staggering jump from a normal monthly spend of around a couple hundred dollars. Additional coverage from Tom’s Hardware and TechSpot underscored the same central point: once an attacker has a valid credential for an AI service, they can convert that credential into compute consumption at machine speed.

While this incident does not involve the loss of sensitive corporate data, it illustrates something equally important about modern AI infrastructure. AI automation dramatically increases the financial consequences of credential theft, because the attacker is no longer limited by human effort, manual workflows, or slow operational constraints.

AI APIs Turn Credentials Into Financial Targets

API keys have always been sensitive credentials, but generative AI services have changed the economic incentives around stealing them in ways many teams have not fully internalized. AI APIs allow developers to generate text, images, code, and other outputs programmatically using large cloud-hosted models, and these services are typically billed according to usage, measured in tokens, compute cycles, or request volume.

Under normal conditions, this model works well because developers pay only for the AI workloads they actually run. The problem arises when an attacker obtains access to an API key and begins issuing automated requests at scale, because generative AI platforms are designed to handle high throughput by default. Once automation is introduced, “a lot of requests” stops being an abstract concern and becomes a simple script loop that can run all night without getting tired, slowing down, or second-guessing whether the costs make sense.

In the Gemini case described in the reporting, the attacker did not need to exploit a software vulnerability or defeat sophisticated defenses. They simply used the service as intended, and the billing system faithfully recorded every request.

The Rise of an AI Compute Black Market

Incidents like this may soon become more common, and the reason has as much to do with economics as it does with cybersecurity tactics. Hackers are increasingly using AI tools to generate malware, automate phishing campaigns, produce convincing social engineering content, and accelerate vulnerability discovery, but running large-scale AI workloads can be expensive. Commercial AI APIs cost money, and private inference infrastructure costs even more once you include hardware, hosting, orchestration, and ongoing maintenance.

That cost pressure creates an incentive to steal compute resources, and it is not hard to imagine how this evolves. Just as cybercriminals once focused on stealing cloud infrastructure to mine cryptocurrency, we should expect a thriving underground economy around stolen AI API keys and stolen AI compute capacity, because those credentials effectively become pre-funded access tokens to powerful model capabilities.

Attackers already scan public repositories, config files, and developer environments looking for exposed secrets, and AI credentials are especially attractive because misuse converts directly into billable activity. From the attacker’s perspective, it is a tidy arrangement: they get the AI capability, the victim pays the bill, and the provider sees a stream of “valid” requests.
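
Defenders can run the same kind of scan against their own code before an attacker does. The following is a minimal sketch, not a complete secret scanner: it assumes Google-issued API keys follow the commonly observed shape of `AIza` plus 35 URL-safe characters, and the function name `find_exposed_keys` is hypothetical. Dedicated secret-scanning tools cover far more credential formats and should be preferred in practice.

```python
import re

# Assumption: Google-issued API keys commonly start with "AIza" followed by
# 35 URL-safe characters. This is a heuristic and may miss or over-match.
GOOGLE_KEY_PATTERN = re.compile(r"AIza[0-9A-Za-z_\-]{35}")


def find_exposed_keys(text: str) -> list[tuple[int, str]]:
    """Return (line_number, redacted_key) pairs for each suspected key."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for match in GOOGLE_KEY_PATTERN.finditer(line):
            key = match.group(0)
            # Redact the key so the scan report does not leak it again.
            findings.append((line_number, key[:8] + "..."))
    return findings
```

Running a check like this in a pre-commit hook or CI job catches a key before it reaches a public repository, which is cheaper than rotating it afterward.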

Recovering the Money May Not Be Easy

Even if teams detect credential abuse quickly, recovering the financial damage can be difficult, and this is where the incident becomes especially instructive for anyone building with AI. Most cloud providers operate under a shared responsibility model in which customers are responsible for securing credentials and controlling service usage. When an API key is abused, providers may not automatically refund charges, particularly when the requests appear technically valid and properly authenticated.

Proving that you did not authorize that usage can be a messy exercise that involves logs, timelines, support escalation, and often a debate about whether guardrails were configured appropriately. In practical terms, this means many organizations may find themselves trying to explain why an attacker’s automated workload should not count as their own, which can become a painful argument when the platform’s billing systems did exactly what they were built to do.

All of that adds up to a simple conclusion: if your AI credentials are compromised, the path back to “making it right” may be slow, uncertain, and operationally exhausting.

AI Guardrails Are Becoming a Core Security Control

The Gemini API abuse story highlights a shift that security teams should take seriously. AI security is not only about protecting training data, defending against prompt injection, or securing model pipelines; AI infrastructure itself has become a financial attack surface. Generative AI workloads are compute-intensive by design, which means every inference request consumes resources that translate directly into cost, and automation makes it easy for attackers to consume those resources at a breathtaking pace.

This is why guardrails matter, and why they should be treated as essential controls rather than optional configuration. Usage quotas, rate limits, budget thresholds, and anomaly detection mechanisms are often the difference between a contained incident and a catastrophic bill, particularly when a service can be driven programmatically at high speed.
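
To make the idea of a budget threshold concrete, here is a minimal sketch of a hard spending cap with an early-warning level. The class name `BudgetGuard`, its parameters, and the 80 percent alert level are all hypothetical choices for illustration, and the cost passed in is assumed to be an estimate the caller computes. A guard like this only covers calls made through your own application; a stolen key used from elsewhere bypasses it, so the equivalent quota or budget control also needs to be set on the provider side.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a request would push spend past the hard cap."""


class BudgetGuard:
    def __init__(self, daily_budget_usd: float, alert_fraction: float = 0.8):
        self.daily_budget_usd = daily_budget_usd
        self.alert_fraction = alert_fraction  # warn at this share of budget
        self.spent_usd = 0.0

    def charge(self, estimated_cost_usd: float) -> str:
        """Record a request's estimated cost, or refuse it at the cap."""
        if self.spent_usd + estimated_cost_usd > self.daily_budget_usd:
            # Hard stop: the request is rejected before any money is spent.
            raise BudgetExceeded(
                f"daily budget of ${self.daily_budget_usd:.2f} would be exceeded"
            )
        self.spent_usd += estimated_cost_usd
        if self.spent_usd >= self.alert_fraction * self.daily_budget_usd:
            return "alert"
        return "ok"
```

The design choice worth noting is that the cap fails closed: once the limit is reached, requests stop until a person intervenes, rather than continuing while a notification sits unread.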

If a system usually generates a few hundred AI requests per day, then a sudden spike to tens of thousands should not merely trigger an alert; it should trigger automated containment.
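
One way to picture automated containment is a sliding-window counter that calls a containment hook the moment request volume exceeds a multiple of the normal baseline. This is a sketch under stated assumptions: `SpikeContainment`, its parameters, and the `contain` callback are hypothetical names, and the callback stands in for whatever action your environment supports, such as disabling or rotating the key.

```python
from collections import deque
from typing import Callable


class SpikeContainment:
    def __init__(
        self,
        baseline_per_window: int,
        spike_multiplier: float,
        contain: Callable[[], None],
        window_seconds: float = 86400.0,
    ):
        self.threshold = baseline_per_window * spike_multiplier
        self.window_seconds = window_seconds
        self.contain = contain  # hypothetical hook, e.g. disable the key
        self.timestamps: deque[float] = deque()
        self.contained = False

    def record_request(self, now: float) -> bool:
        """Record one request; return False once the key is contained."""
        if self.contained:
            return False
        self.timestamps.append(now)
        # Drop requests that have aged out of the sliding window.
        while self.timestamps and self.timestamps[0] <= now - self.window_seconds:
            self.timestamps.popleft()
        if len(self.timestamps) > self.threshold:
            self.contained = True
            self.contain()  # fires exactly once per incident
            return False
        return True
```

With a baseline of a few hundred requests per day and a multiplier of, say, 10, the hook would fire long before usage reached the tens of thousands, and every later request would be refused until someone reviews the incident.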

How PointGuard AI Can Help

As organizations expand their use of generative AI, they need security capabilities designed for the realities of AI infrastructure, including the risk of credential abuse and runaway compute consumption. PointGuard AI helps organizations secure AI environments by providing visibility and guardrails across the AI application stack, which is increasingly necessary as AI services spread across development teams, products, and third-party integrations.

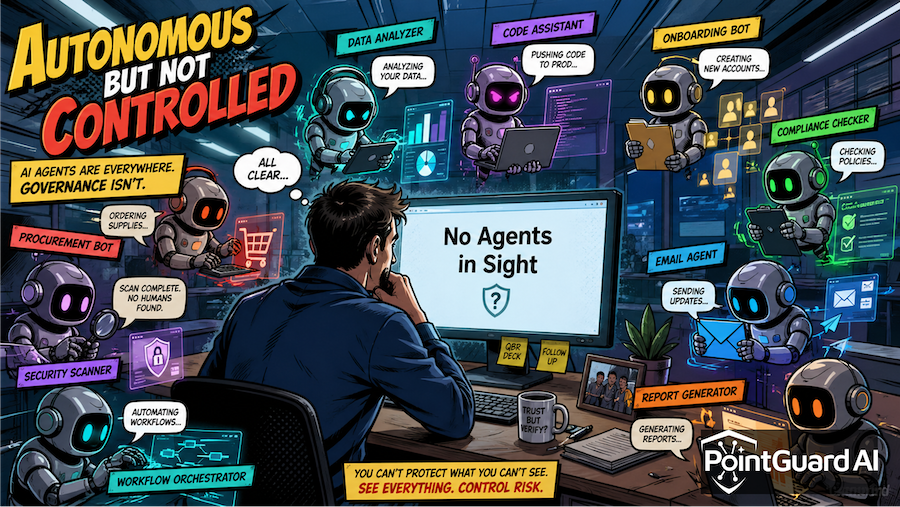

PointGuard AI supports organizations by making AI usage and AI dependencies visible, which helps teams understand where models, APIs, and external AI services are integrated and where sensitive credentials may be exposed. That visibility matters because you cannot protect what you cannot see, and AI adoption has a habit of proliferating faster than governance programs can keep up.

PointGuard AI also helps by enabling continuous monitoring for anomalies in AI activity, including sudden spikes in inference requests, abnormal token consumption patterns, or unusual access behavior that may indicate a compromised key. When those signals are detected early, teams can revoke credentials, shut down suspicious workloads, and prevent costs from escalating into five- or six-figure territory.

Taken together, this is why incidents like the Gemini billing shock should be read as more than an odd cautionary tale. In the age of AI automation, the most damaging incident may not be the one that steals your data. It may be the one that quietly converts your credentials into someone else’s compute budget until the invoice arrives.