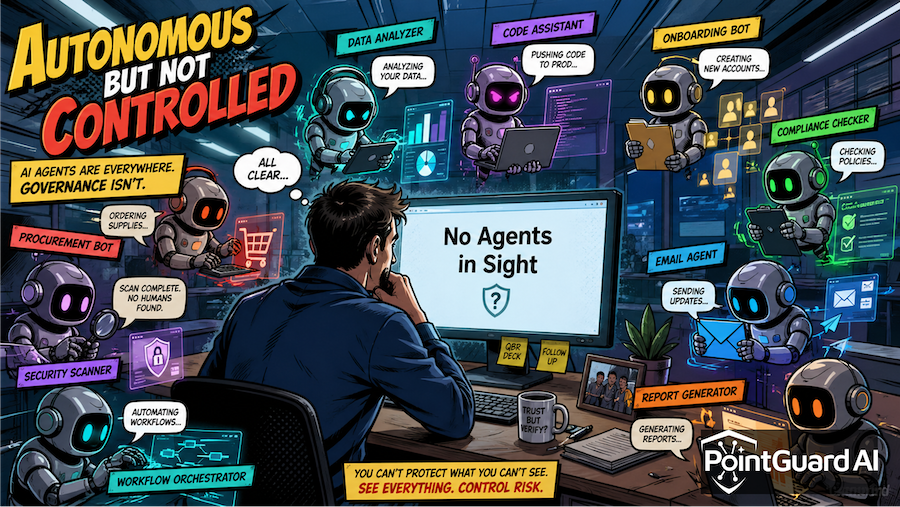

AI agents are rapidly becoming autonomous actors, and attackers are already learning how to manipulate them. Google DeepMind’s “AI Agent Traps” paper describes a class of threats that target not the model itself but the environment the agent operates in.

This marks a fundamental shift in AI security. Traditional defenses focus on models, APIs, and access controls. Agentic systems expand the attack surface to everything an agent consumes and interacts with: web content, APIs, Model Context Protocol (MCP) servers, and even other agents. DeepMind’s research shows that adversarial inputs can influence agents at every stage, from perception to reasoning to execution (Rivista AI).

This risk is already emerging in practice. As reported in SecurityWeek’s coverage of AI agent attacks, attackers can embed malicious instructions directly into web content to manipulate, deceive, and exploit autonomous agents. Instead of breaking systems, they are increasingly co-opting them.

From Prompt Injection to Environmental Control

Prompt injection was only the beginning. Agent traps extend this concept into a full-stack attack model.

DeepMind identifies six categories of attacks: content injection, semantic manipulation, cognitive poisoning, behavioral control, systemic multi-agent attacks, and human-in-the-loop manipulation. These attacks exploit how agents perceive, reason, remember, and act, essentially targeting the full lifecycle of autonomous systems (Decrypt).

The most immediate and practical risk is content injection, where hidden instructions are embedded in content that agents parse but humans do not see. These can exist in HTML comments, metadata, or encoded formats, allowing attackers to silently influence behavior. Some techniques have demonstrated success rates of up to 86% in partially hijacking agent actions (BigGo Finance).
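
To make the hiding places concrete, here is a minimal Python sketch that pulls out text a browser would not render (HTML comments, `meta` content, and elements hidden with the `hidden` attribute or inline CSS) and flags anything matching a short phrase list. The class name, function name, and phrase list are assumptions made for illustration, not something taken from the DeepMind paper. It assumes well-formed HTML and would miss encoded payloads, external stylesheets, and rephrased instructions.

```python
import re
from html.parser import HTMLParser

# Hypothetical phrase list for illustration; real detection needs far more than this.
SUSPICIOUS = re.compile(
    r"ignore (all )?(previous|prior) instructions|do not tell the user", re.I
)

# Elements without a closing tag, so they must not affect nesting depth.
VOID = {"area", "base", "br", "col", "embed", "hr", "img", "input",
        "link", "meta", "source", "track", "wbr"}


class HiddenTextScanner(HTMLParser):
    """Collects text a human reader would not see on the rendered page."""

    def __init__(self):
        super().__init__()
        self.findings = []       # (location, text) pairs
        self._hidden_depth = 0   # > 0 while inside a hidden element

    def handle_comment(self, data):
        self.findings.append(("comment", data.strip()))

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and attr.get("content"):
            self.findings.append(("meta", attr["content"]))
        if tag in VOID:
            return
        style = (attr.get("style") or "").replace(" ", "").lower()
        hidden = ("hidden" in attr or "display:none" in style
                  or "visibility:hidden" in style)
        # Once inside a hidden element, every nested element is hidden too.
        if self._hidden_depth or hidden:
            self._hidden_depth += 1

    def handle_endtag(self, tag):
        if tag not in VOID and self._hidden_depth:
            self._hidden_depth -= 1

    def handle_data(self, data):
        if self._hidden_depth and data.strip():
            self.findings.append(("hidden_element", data.strip()))


def scan_html(html):
    """Return the non-rendered snippets that match the suspicious phrase list."""
    scanner = HiddenTextScanner()
    scanner.feed(html)
    scanner.close()
    return [(where, text) for where, text in scanner.findings
            if SUSPICIOUS.search(text)]
```

A scanner like this is best treated as one signal among several. Phrase matching is easy to evade, so the more durable control is to treat all retrieved content as untrusted data rather than as instructions.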

This aligns closely with real-world incidents already seen across the AI ecosystem. Prompt injection has enabled silent data exfiltration. Supply chain attacks have inserted malicious logic into widely used libraries. MCP-connected systems have exposed execution paths through trusted integrations. The pattern is consistent: agents trust inputs that are now adversarial.

MCP Servers: The New Control Plane Risk

MCP architectures significantly amplify this challenge. MCP servers act as intermediaries between agents and external systems, enabling dynamic access to data, tools, and services.

DeepMind’s findings highlight that attackers can weaponize this interaction layer by embedding malicious instructions in content retrieved through trusted channels. Because agents interpret and act on this content, attackers can influence downstream behavior—triggering tool execution, overriding instructions, or exfiltrating sensitive data.

This effectively turns MCP into a control plane for agent behavior. Without enforcement at this layer, organizations are allowing external inputs to shape internal execution paths.
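
As a rough picture of what enforcement at this layer could look like, the Python sketch below applies a deny-by-default check to each tool call before it runs. The `ToolCall` and `Policy` types, the tool names, and the hosts are hypothetical and are not part of the MCP specification or of any product. A real gateway would sit in the request path and apply much richer rules.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class ToolCall:
    tool: str
    args: dict


@dataclass
class Policy:
    # Hypothetical policy shape: tool name -> set of permitted argument names.
    allowed_tools: dict = field(default_factory=dict)
    # Hosts that URL-valued arguments are allowed to point at.
    allowed_hosts: set = field(default_factory=set)


def check_call(call, policy):
    """Return (allowed, reason). Anything not explicitly permitted is denied."""
    if call.tool not in policy.allowed_tools:
        return False, f"tool '{call.tool}' is not allowlisted"

    unexpected = set(call.args) - policy.allowed_tools[call.tool]
    if unexpected:
        return False, f"unexpected arguments: {sorted(unexpected)}"

    for name, value in call.args.items():
        if isinstance(value, str) and value.startswith(("http://", "https://")):
            host = urlparse(value).hostname
            if host not in policy.allowed_hosts:
                return False, f"argument '{name}' targets non-allowlisted host '{host}'"

    return True, "ok"
```

The deny-by-default shape is the point of the example: content retrieved through a trusted channel can ask for any tool it likes, but only calls the policy explicitly permits go through.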

Manipulating Reasoning and Behavior

Agent traps extend beyond inputs to influence how agents think and act.

Semantic manipulation subtly biases reasoning by framing information in ways that alter conclusions. These attacks do not appear malicious in isolation, making them difficult to detect. Instead of issuing explicit commands, they reshape how agents interpret context and make decisions.

Behavioral control goes further by inducing agents to execute specific actions. In documented scenarios, agents can be tricked into retrieving sensitive data, invoking tools with attacker-defined parameters, or completing workflows that result in data leakage—all while appearing to perform legitimate tasks (Cointribune).
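
One narrow countermeasure to the data-leakage case is to inspect outbound tool arguments for material that looks like a secret before the call leaves the agent. The sketch below is a simplified illustration in Python. The pattern set and function name are assumptions, the patterns cover only a few well-known formats, and an encoded or split secret would pass straight through.

```python
import json
import re

# Illustrative patterns only; real secret formats vary widely.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "bearer_token": re.compile(r"\bBearer\s+[A-Za-z0-9._\-]{20,}"),
}


def find_secrets(args):
    """Return sorted names of secret patterns found anywhere in a tool call's arguments."""
    # Serialize nested arguments to one string so every value is searched.
    blob = json.dumps(args, default=str)
    return sorted(name for name, pattern in SECRET_PATTERNS.items()
                  if pattern.search(blob))
```

A caller could block or hold for review any tool call where `find_secrets` returns a non-empty list, regardless of how legitimate the surrounding workflow appears.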

This represents a shift from exploiting systems to steering them.

From Isolated Exploits to Systemic Risk

The most concerning development is the emergence of systemic, multi-agent attacks.

As agents increasingly operate in coordinated environments, shared inputs can trigger cascading behavior across systems. Research into agent collaboration already shows that multi-agent environments can behave unpredictably, often amplifying small signals into chaotic outcomes (Science News).

DeepMind’s systemic traps build on this dynamic. A single manipulated input can propagate across multiple agents, creating large-scale effects such as coordinated failures, market disruptions, or widespread data exposure.
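
The propagation argument can be illustrated with a small graph exercise. The Python sketch below assumes a hypothetical map of which component feeds output to which agent, and uses breadth-first search to list every agent that could receive content derived from one manipulated source. It is a worst-case bound that assumes each agent passes the content along unchanged, not a model of how real agents behave.

```python
from collections import deque


def reachable_agents(feeds, source):
    """Breadth-first search over `feeds` (component -> list of downstream agents).

    Returns every agent that can receive content derived from `source`.
    """
    seen = {source}
    queue = deque([source])
    while queue:
        current = queue.popleft()
        for downstream in feeds.get(current, ()):
            if downstream not in seen:
                seen.add(downstream)
                queue.append(downstream)
    seen.discard(source)  # report only what lies downstream of the source
    return seen


# Hypothetical topology, for illustration only.
feeds = {
    "web_page": ["researcher"],
    "researcher": ["summarizer", "planner"],
    "planner": ["trader"],
    "auditor": ["trader"],
}
exposed = reachable_agents(feeds, "web_page")
```

In this made-up topology a single poisoned page reaches four agents, including one that acts on external systems. Mapping these paths ahead of time shows where validation between agents matters most.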

This introduces a new category of risk: emergent failure across agent ecosystems, where the system fails not because of a single exploit, but because of how agents collectively respond.

Why Traditional Security Falls Short

Agent traps bypass conventional defenses because they do not rely on exploiting software vulnerabilities or bypassing authentication. Instead, they exploit trust—trust in data, tools, and workflows.

The agent is not compromised in the traditional sense. It is behaving as designed, but under manipulated conditions. This makes detection difficult and shifts the focus from preventing access to controlling behavior.

How PointGuard AI Addresses Agent Trap Risks

Mitigating these threats requires control at the interaction layer—where agents consume inputs and execute actions. This is where PointGuard AI provides a differentiated approach.

The PointGuard MCP Security Gateway establishes a policy enforcement layer between agents and MCP-connected systems. It inspects inputs and outputs in real time, blocks hidden instructions, validates tool calls, and prevents unauthorized actions before they execute. This directly addresses the core risks identified in the DeepMind research, particularly content injection and behavioral manipulation.

Beyond MCP enforcement, PointGuard provides continuous visibility and control across agent workflows. Its approach to AI Security Governance enables organizations to monitor how agents interact with data, tools, and external systems, ensuring that trust is continuously validated rather than assumed.

PointGuard also enforces context-aware protection to prevent sensitive data leakage through manipulated prompts or poisoned retrieval pipelines. Capabilities such as AI Runtime Detection and Response detect anomalous behavior, block exfiltration attempts, and contain rogue agent activity in real time.

Together, these controls shift organizations from implicit trust to continuous verification and enforcement, which is essential in dynamic, agent-driven environments.

The Bottom Line

AI agents are redefining both automation and risk. The DeepMind research makes clear that attacks are moving beyond models to the environments agents operate in.

As agents become more autonomous, the question is no longer whether they can be manipulated, but how easily and at what scale. Organizations that implement control at the agent interaction layer—especially across MCP systems—will be able to scale safely. Those that do not will face risks that are harder to detect, faster to propagate, and more difficult to contain.

Agentic AI is accelerating. Security needs to catch up.