It is only the first day of RSA Conference 2026, and a clear pattern has already emerged across the Cloud Security Alliance Summit. Nearly every session centers on agentic AI, the unprecedented rate of change it is driving, and the dramatic expansion of risk that comes with it. What is equally clear is that traditional security approaches, built for human-scale threats and response times, are no longer sufficient.

Three presentations in particular captured this shift from different angles:

- Caleb Sima, Chair, AI Safety Initiative, Cloud Security Alliance

- Kavitha Mariappan, Chief Transformation Officer, Rubrik

- Jim Bowie, CISO, Tampa General Hospital

Taken together, they tell a consistent story. The threat landscape is accelerating beyond human capability, the attack surface is expanding in ways organizations don’t fully understand, and the only viable path forward is to rethink security as an AI-native discipline. As one speaker put it succinctly, “Your job is to automate your job.” Another reframed the entire paradigm: “AI is not software. It’s a new workforce.”

Detection at Human Speed in a Machine-Speed World

Caleb Sima’s presentation set the tone by challenging one of the foundational assumptions of modern cybersecurity: that detection and response, as currently designed, are effective.

He began with a scenario every security leader recognizes—the middle-of-the-night breach, the frantic coordination, the long recovery, and the inevitable postmortem question: why didn’t we detect this sooner? His conclusion was not that organizations need better tools, but that the model itself is flawed. Detection has remained largely unchanged for decades, even as the environment it is meant to protect has transformed.

Most organizations operate with significant visibility gaps. Only a fraction of their attack surface is actively monitored, and even within that subset, detection coverage is incomplete. Logs do not equal detections, and detections do not always translate into actionable insight. Meanwhile, response teams are overwhelmed by volume, spending more time filtering false positives than stopping real threats.

The result is a system that moves far too slowly. It can take months to create new detections and months more to identify breaches, even in well-resourced environments. This lag was already problematic when attackers operated at human speed. With AI-enabled adversaries, it becomes untenable.

Attackers are no longer probing environments sequentially. They are operating in parallel, deploying multiple agents that scan, exploit, and move laterally in minutes. The compression of time—from vulnerability discovery to exploitation—has fundamentally changed the nature of risk. In this environment, human-driven detection and response processes simply cannot keep up.

AI as a Workforce—and a Threat Multiplier

Kavitha Mariappan expanded on this shift by reframing how organizations should think about AI itself. Rather than viewing it as another layer of software, she described AI as a new workforce—autonomous, scalable, and deeply embedded in business operations.

This perspective highlights both the opportunity and the risk. Organizations are rapidly adopting agentic systems to automate workflows, accelerate development, and improve efficiency. The growth is exponential, with AI-driven applications moving from a negligible share of enterprise environments to a significant portion in a very short time.

At the same time, adversaries are leveraging the same capabilities. Many organizations now view AI-powered attackers as their most significant threat, and the volume and sophistication of attacks continue to increase. The challenge is compounded by the fact that these systems operate in ways that are difficult to fully understand or control.

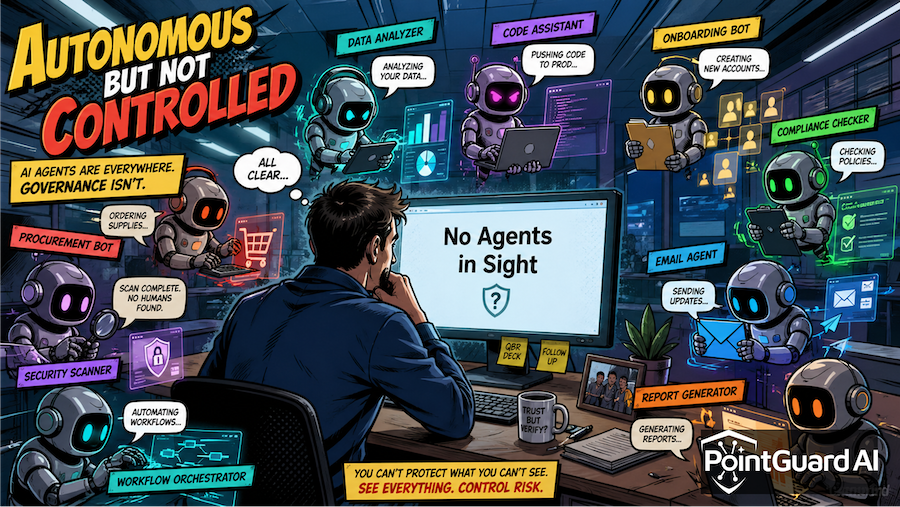

One of the most important shifts Mariappan highlighted is the rise of non-human identities. Machine identities, APIs, and autonomous agents now outnumber human users by a wide margin, each with its own permissions and potential vulnerabilities. These identities often carry excessive privileges and are not governed with the same rigor as human access, creating a rapidly expanding attack surface.

This leads to a critical insight: attackers are no longer breaking through perimeter defenses. They are leveraging valid credentials and existing access paths. In other words, they are logging in rather than breaking in.
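To make the over-privilege problem concrete, here is a minimal sketch, assuming an organization can export each identity's granted permissions alongside the permissions it actually exercised during a review window. It compares the two and flags non-human identities whose unused grants exceed a threshold. The `Identity` record, the permission strings, the identity names, and the 50% threshold are all hypothetical illustrations; this is not how PointGuard AI or any speaker's tooling works.

```python
from dataclasses import dataclass, field


@dataclass
class Identity:
    """Hypothetical identity record: name, type, granted and observed permissions."""
    name: str
    kind: str  # "human", or a non-human type such as "service_account" or "agent"
    granted: set[str] = field(default_factory=set)
    used: set[str] = field(default_factory=set)  # permissions seen in the review window


def excess_privileges(identity: Identity) -> set[str]:
    """Permissions granted to the identity but never exercised in the window."""
    return identity.granted - identity.used


def flag_over_permissioned(
    identities: list[Identity], max_unused_ratio: float = 0.5
) -> list[tuple[str, list[str]]]:
    """Return non-human identities whose unused share of grants exceeds the threshold."""
    flagged = []
    for ident in identities:
        # Skip human accounts and identities with no grants at all.
        if ident.kind == "human" or not ident.granted:
            continue
        unused = excess_privileges(ident)
        if len(unused) / len(ident.granted) > max_unused_ratio:
            flagged.append((ident.name, sorted(unused)))
    return flagged


# Hypothetical sample data for illustration only.
identities = [
    Identity("ci-deploy-bot", "service_account",
             {"repo:read", "repo:write", "secrets:read", "iam:admin"}, {"repo:read"}),
    Identity("support-agent", "agent",
             {"tickets:read", "tickets:write"}, {"tickets:read", "tickets:write"}),
    Identity("alice", "human", {"repo:read", "iam:admin"}, set()),
]

print(flag_over_permissioned(identities))
# → [('ci-deploy-bot', ['iam:admin', 'repo:write', 'secrets:read'])]
```

A bot that only ever reads a repository but holds `iam:admin` is exactly the kind of valid, unused credential an attacker can log in with. Comparing grants against observed use is one simple way to surface it; real environments would also need context about what each identity is supposed to do.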

At the same time, agentic systems introduce entirely new risk vectors, from prompt injection to memory poisoning to over-permissioned agents acting as unintended insiders. These risks are not theoretical; they are already appearing in production environments. Traditional security controls, designed for predictable systems and human behavior, are not equipped to detect or mitigate these threats.

The Reality of AI Adoption in Critical Environments

Jim Bowie’s perspective brought these challenges into a real-world operational context, particularly in healthcare. His experience underscores a critical point: AI adoption is not optional, and in many cases, it delivers tangible, life-saving benefits.

AI is already improving patient outcomes by reducing wait times, optimizing resource utilization, and alleviating administrative burdens on clinicians. Preventing its use is neither practical nor desirable. At the same time, the risks associated with ungoverned AI deployment are significant.

Bowie described the difficulty of maintaining visibility and control as AI adoption accelerates. Even with governance processes in place, organizations often underestimate the scale of deployment. Approved applications quickly multiply, as existing tools enable new capabilities and users introduce additional integrations without centralized oversight.

Traditional security processes cannot keep pace with this level of change. By the time a manual review is completed, new agents, identities, and connections have already been established. Attempting to slow adoption is not a viable strategy, as the business depends on these capabilities to operate effectively.

This creates a fundamental shift in the role of security. It is no longer about enforcing constraints or acting as a gatekeeper. Instead, security must enable rapid adoption while ensuring that appropriate controls are applied dynamically and continuously.

PointGuard AI Perspective: Securing the System—and the Context

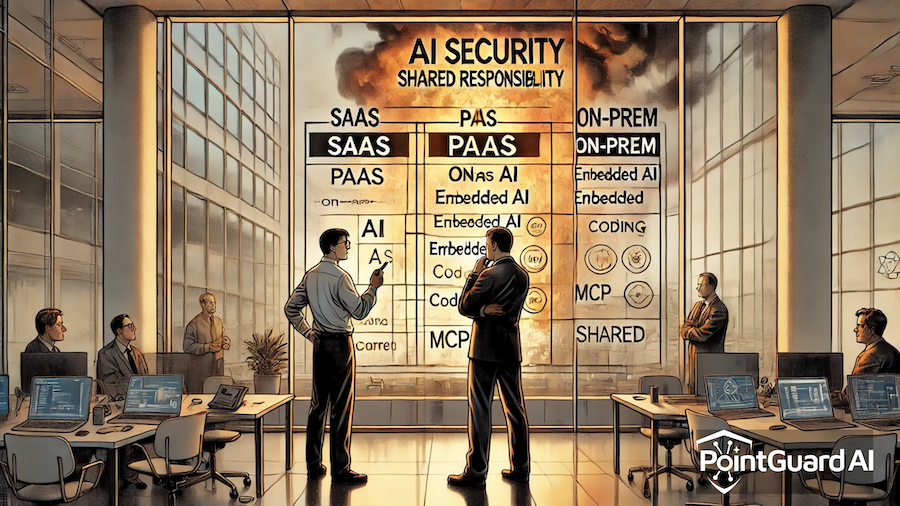

The consistent theme across these sessions is that security must evolve in two directions simultaneously. Organizations must use AI defensively to match the speed and scale of modern threats, while also securing the AI systems they are deploying.

At PointGuard AI, we believe this requires a fundamentally different approach—one grounded in context.

The core challenge highlighted throughout the summit is not just visibility, but understanding. Organizations lack a clear view of how assets, identities, and systems interact, particularly as AI introduces new layers of complexity. Without this context, detection produces noise, response becomes reactive, and governance struggles to keep pace.

Contextual, risk-based prioritization has long been a foundation of effective security, but it becomes critical in an AI-driven environment. Security decisions must be informed by an understanding of what matters most, how systems are connected, and how risks propagate across those connections.

This is why context is embedded throughout the PointGuard AI platform and central to our MCP Security Gateway. The Model Context Protocol (MCP) exists because modern AI systems operate through interconnected models, tools, data sources, and services, yet many security tools fail to reflect those connections in practice. They treat signals in isolation rather than as part of a broader system.

The PointGuard AI platform overview shows how this approach delivers full lifecycle protection across AI agents, models, MCP integrations, and application environments. The platform continuously monitors dependencies, enforces guardrails, and correlates risk across systems, ensuring that security decisions are grounded in real-world context.

For organizations looking to operationalize this further, PointGuard AI's AI Security & Governance solutions provide visibility into AI usage, enforce policy controls across agent interactions, and secure the entire AI lifecycle, from discovery through runtime protection. This is especially critical in environments where agentic systems operate autonomously and at scale.

The MCP Security Gateway builds on this foundation by enforcing context-aware security across AI interactions, providing visibility and governance over how models, agents, data, and services connect and operate. By focusing on relationships—not just individual components—it enables organizations to manage risk in a way that aligns with how modern AI environments actually function.

Ultimately, the shift described at RSA 2026 is not incremental. It is a fundamental change in how security must operate. As AI becomes both a defensive necessity and a core part of business infrastructure, organizations must move beyond human-speed processes and fragmented visibility.

They must adopt AI-driven defense, grounded in contextual understanding, to secure not only their environments but the AI systems that now power them.