A recent SC Magazine perspective, MCP is the backdoor your zero trust architecture forgot to close, highlights a critical and fast-emerging issue in enterprise AI security. The author is right to call out MCP as a blind spot. As organizations accelerate adoption of AI agents and copilots, MCP is becoming the execution layer that operates outside traditional security controls.

That gap is not theoretical. It is structural.

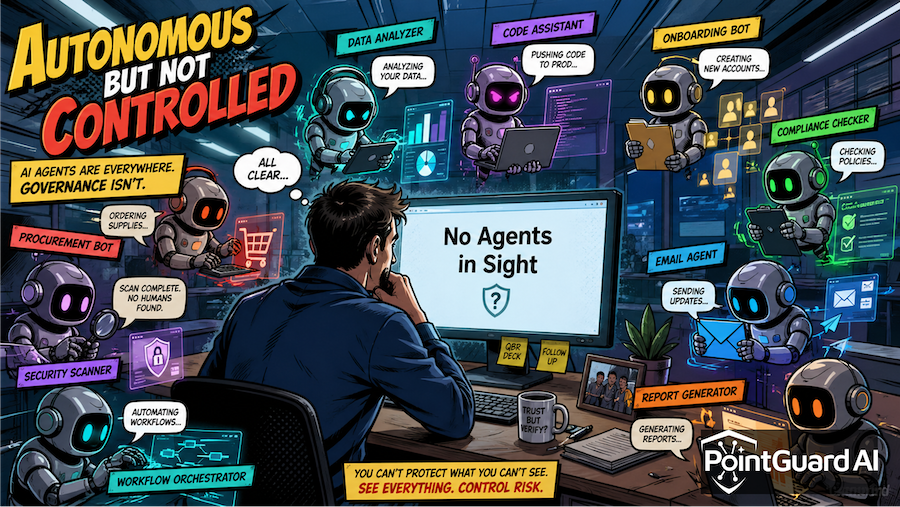

AI Agents Changed the Rules

Zero trust architectures were designed around users, devices, and applications. Identity became the foundation for access decisions, reinforced by context such as device posture and behavior. This model works when actions are initiated by humans or predictable systems.

AI agents do not follow that model. They continuously interpret context, discover tools, retrieve data, and execute actions across systems. They do not just request access. They decide what to do and then do it.

MCP accelerates this shift by standardizing how agents connect to tools and services. It removes friction and enables rapid integration, but it also creates a direct path from model reasoning to system execution. That path sits largely outside existing control points.

Zero trust governs access. MCP governs execution. That distinction is where the problem begins.

The MCP Control Gap

The SC Magazine article describes MCP as a backdoor because it bypasses the controls organizations rely on. When an agent invokes an MCP tool, the request is typically treated as trusted because it originates from an internal system or approved workflow.

There is little evaluation of whether the action itself is appropriate.

Security teams often lack visibility into which MCP servers are in use, what permissions they hold, or how they are being invoked. Agents can dynamically discover and interact with tools, expanding the attack surface in ways that are difficult to track.

This creates a new class of risk. Agents can invoke over-permissioned tools, interact with unverified services, and execute actions influenced by prompt injection or malicious input. Real-world incidents have already demonstrated how compromised MCP components can be used to exfiltrate sensitive data and manipulate workflows.

The issue is not just access. It is the absence of control at the moment decisions are executed.

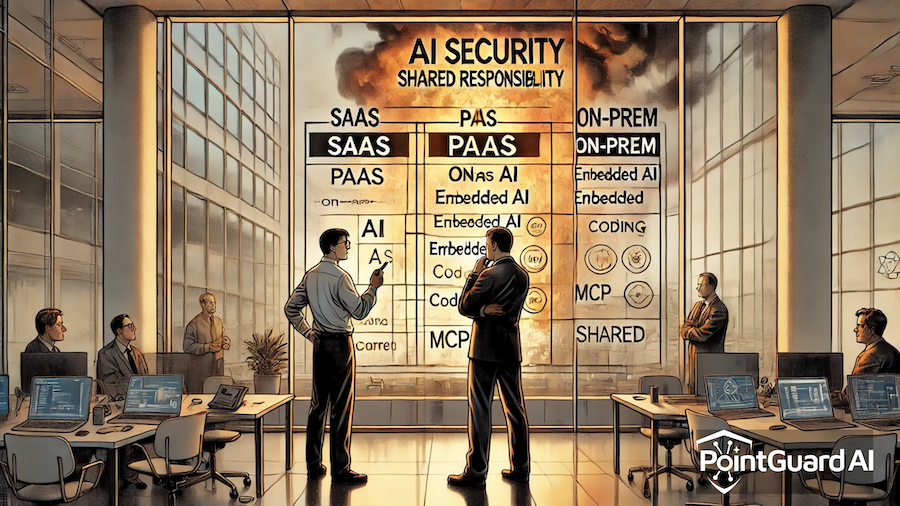

Why Existing Security Models Fall Short

Traditional IAM systems answer the question of who is making a request. In agentic environments, that answer becomes less meaningful because the request originates from an AI system acting on behalf of a user. Identity alone cannot determine whether an action is safe.

Zero trust architectures improve on this by enforcing least privilege and continuous verification, but they still focus on access rather than behavior. They validate whether an entity can reach a resource, not whether the action being taken makes sense.

Network controls and API gateways provide visibility into traffic, but they lack understanding of AI workflows. They cannot interpret why an agent is calling a tool or whether that action was influenced by malicious input.

This creates a fundamental gap. The security stack can authenticate requests, but it cannot judge whether those requests should happen at all.

Context Is the Missing Layer

Securing MCP requires moving beyond identity-centric controls to context-aware decision making. Every AI-driven action must be evaluated based on what is being done, why it is being done, and what the potential impact is.

Context includes data sensitivity, system criticality, user intent, and the broader workflow. It also includes understanding how a request was generated and whether it was influenced by external or untrusted input.

Without this level of context, policies either become too permissive or too restrictive. The goal is not to block AI. It is to enable it safely by making informed, real-time decisions.

Fixing MCP with a New Control Plane

This is exactly the challenge the PointGuard AI MCP Security Gateway is designed to address.

Instead of relying on controls that sit outside the AI workflow, the MCP Security Gateway operates directly within the agent-to-tool interaction layer. It becomes the enforcement point for all MCP activity, ensuring that every request is evaluated before execution.

The gateway provides visibility into agents, MCP servers, and connected tools, helping organizations understand how AI systems are interacting with their environment. This visibility is essential for identifying shadow AI and hidden risk.

It also introduces runtime guardrails that analyze requests and responses in real time. These guardrails detect prompt injection patterns, unsafe actions, and policy violations before they reach downstream systems.

Most importantly, the gateway enables contextual policy enforcement. Policies are dynamically applied based on business context, asset criticality, and operational requirements. This allows organizations to enforce practical controls that protect data without slowing down AI initiatives.

For a broader view of this approach, see the PointGuard AI Agentic Security Platform.

The Bottom Line

The SC Magazine article correctly identifies MCP as a critical gap in modern security architecture. It exposes the limits of traditional zero trust models in a world of autonomous AI systems.

Closing that gap requires more than extending existing controls. It requires a new approach that governs how AI systems behave at the moment actions are executed.

The MCP Security Gateway brings that control into the execution layer, combining visibility, runtime guardrails, and contextual policy enforcement.

As AI adoption accelerates, this will become foundational. Organizations that address it now will be able to scale AI securely. Those that do not will find that their most powerful systems are also their least controlled.

Sources

- SC Magazine: https://www.scworld.com/perspective/mcp-is-the-backdoor-your-zero-trust-architecture-forgot-to-close

- PointGuard AI MCP Security Gateway: https://www.pointguardai.com/mcp-security-gateway

- PointGuard AI Agentic Security Platform: https://www.pointguardai.com/agentic-ai-security