This week’s leak of Claude source code wasn’t the result of a sophisticated cyberattack or a novel exploit. It came from something far more familiar to every security team: a routine CI/CD mistake. Yet within hours, that mistake exposed the inner workings of one of the most advanced AI systems in the world, effectively handing over a blueprint that competitors and adversaries can now study.

That moment captures where we are today. AI security is no longer just about defending against attackers. It is about managing the speed, scale, and fragility of the systems we are rapidly building and deploying.

The Real Issue Isn’t Just Attacks. It’s Acceleration.

The Claude incident, which we have analyzed separately, is alarming not because of how it happened, but because of what it represents. A simple operational error instantly became a global AI security event. That kind of amplification is new, and it fundamentally changes how we need to think about risk.

AI systems accelerate everything. A small mistake can quickly become a large exposure. That exposure can propagate across environments, teams, and organizations, often before anyone realizes what has happened. What used to be a contained incident can now become systemic in a very short time.

This is the shift. The attack surface has not just expanded. It has become faster and more interconnected.

A Pattern, Not an Outlier

If the Claude leak were isolated, it would still be concerning. But it is part of a broader pattern that is unfolding in real time.

Over the past week alone, we have documented multiple high-severity incidents in the PointGuard AI Security Incident Tracker, including:

- Prompt injection vulnerabilities in ChatGPT enabling silent data exfiltration

- CrewAI framework flaws escalating prompt injection into code execution

- OpenAI Codex command injection exposing developer credentials

- A LiteLLM supply chain attack affecting downstream AI integrations

Taken together with dozens of other recent incidents, these events make one thing clear. AI-related security incidents are increasing rapidly in both frequency and severity, and they are impacting every layer of the AI stack. This is no longer a future concern. It is happening now.

AI Not Only Creates Risks. It Amplifies Them.

One of the most important things to understand is that AI is not introducing entirely new categories of security problems. It is amplifying existing ones and exposing them in more impactful ways.

The Claude leak was rooted in a CI/CD misconfiguration. The Codex issue was a command injection problem. Vulnerabilities reported in LangChain were classic injection and deserialization flaws. These are challenges the industry has dealt with for decades.

What has changed is the scale and speed of impact. In AI-driven systems, a prompt can become an execution path, a model can influence decisions across systems, and an agent can take actions that were once limited to humans.

In this environment, a simple mistake becomes a multiplier.
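To make "a prompt can become an execution path" concrete, here is a minimal Python sketch of one familiar mitigation: treating model-proposed command text as untrusted input. The `ALLOWED_COMMANDS` allowlist and the `build_argv` helper are hypothetical names introduced for illustration. They do not describe how any of the products named above work.

```python
import shlex

# Hypothetical allowlist: the only programs an agent may launch.
ALLOWED_COMMANDS = {"ls", "cat"}


def build_argv(model_output: str) -> list[str]:
    """Turn model-proposed command text into an argv list, or raise.

    The unsafe pattern is handing the raw string to a shell, for example
    subprocess.run(model_output, shell=True), where ';' or '&&' would
    chain extra commands. Here the text is only tokenized, never
    interpreted by a shell.
    """
    argv = shlex.split(model_output)
    if not argv or argv[0] not in ALLOWED_COMMANDS:
        raise PermissionError(f"command not allowed: {model_output!r}")
    return argv
```

The returned list is meant to be passed to `subprocess.run(argv)` without `shell=True`, so shell operators arrive as literal arguments instead of being executed. This closes only one path: an allowed program can still be misused through its own arguments, which is why argument-level policy is also needed.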

A Critical Moment for the Industry

At last week’s RSA Conference, Jim Reavis, CEO of the Cloud Security Alliance, captured this urgency when he said we need an “Agentic AI Manhattan Project.” That perspective reflects what many of us are seeing firsthand.

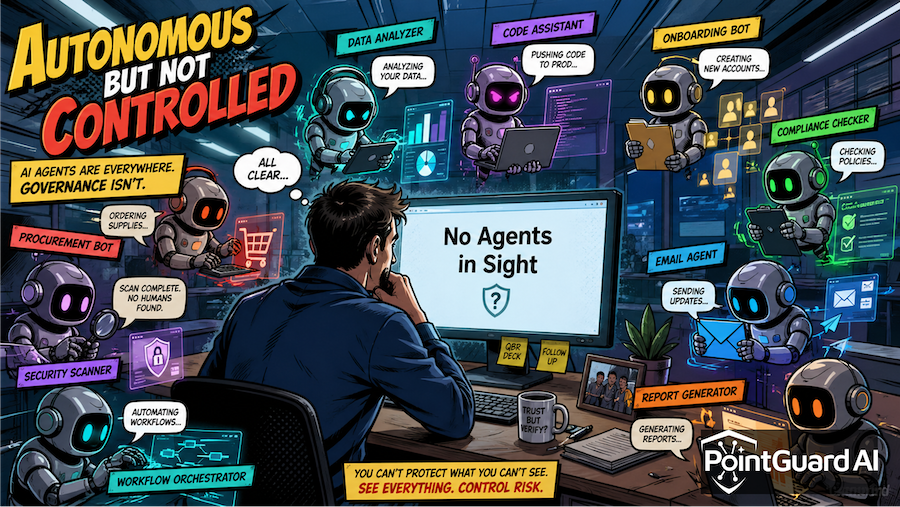

We have reached a point where incremental improvements are no longer enough. The pace of AI adoption, especially with the rise of agents and MCP-based architectures, is outpacing traditional security approaches. Organizations are deploying systems that can act, decide, and execute, often without equivalent controls in place.

In conversations with CISOs across industries, there is clear recognition of this challenge. The urgency is understood. The question is how quickly organizations can move to implement meaningful protections.

Moving From Awareness to Control

The encouraging reality is that these challenges are solvable. We already know how to secure systems that execute code, access sensitive data, and interact with external services. The gap lies in applying those principles effectively to AI-driven environments.

This is why we have extended our AI Security Platform to include an MCP Security Gateway. As AI agents become embedded in enterprise workflows, organizations need a way to re-establish control. The MCP Security Gateway enforces identity, access, and policy at the point where agents operate, ensuring that actions taken by AI systems are governed and monitored in real time.

This represents a shift from reactive security to proactive enforcement. Instead of relying on models to behave correctly, organizations can define and enforce how systems are allowed to behave.
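As a rough illustration of what enforcing policy at the point where agents operate can mean, the sketch below shows a deny-by-default check on agent tool calls, with every decision recorded. It is not the MCP Security Gateway's implementation or API. The `ToolCall` and `PolicyGateway` classes, their fields, and the agent and tool names are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    """A tool invocation requested by an agent (hypothetical shape)."""
    agent_id: str
    tool: str
    arguments: dict


@dataclass
class PolicyGateway:
    """Deny-by-default policy check that records every decision."""
    # Maps an agent identity to the set of tools it may invoke.
    allowed_tools: dict[str, set[str]]
    # (agent_id, tool, decision) entries, kept for monitoring.
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)

    def authorize(self, call: ToolCall) -> bool:
        # Unknown agents get an empty tool set, so they are denied.
        permitted = call.tool in self.allowed_tools.get(call.agent_id, set())
        decision = "allow" if permitted else "deny"
        self.audit_log.append((call.agent_id, call.tool, decision))
        return permitted
```

For example, a gateway built as `PolicyGateway({"support-bot": {"search_tickets"}})` would allow `support-bot` to call `search_tickets` and deny everything else, including any call from an agent it has never seen. The point of the design is that the decision does not depend on how the model behaves.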

Beyond Guardrails

There is a common misconception that guardrails alone can secure AI systems. While they are important, they are not sufficient. Prompt-based controls can be bypassed, and model alignment does not guarantee safe outcomes in adversarial conditions.

What is needed is a layered approach that combines guardrails with enforcement and visibility. This includes validating inputs, monitoring outputs, controlling access to data and tools, and ensuring that every action taken by an AI system is governed by policy.

Without these controls, organizations are placing trust in systems designed to be flexible and adaptive. That trust creates opportunities for both accidental and malicious misuse.
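Two of the layers named above, validating inputs and monitoring outputs, can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a complete control. The size limit, the function names, and the two credential patterns (an AWS-style access key ID and a PEM private key header) are chosen only for the example.

```python
import re

# Illustrative limit; a real deployment would tune this per use case.
MAX_INPUT_CHARS = 4000

# Illustrative patterns for credential-shaped strings.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
]


def validate_input(text: str) -> str:
    """Reject empty or oversized input before it reaches the model."""
    if not text.strip():
        raise ValueError("empty input")
    if len(text) > MAX_INPUT_CHARS:
        raise ValueError("input too long")
    return text


def redact_output(text: str) -> tuple[str, int]:
    """Mask credential-shaped strings and report how many were found."""
    hits = 0
    for pattern in SECRET_PATTERNS:
        text, count = pattern.subn("[REDACTED]", text)
        hits += count
    return text, hits
```

A nonzero hit count is a useful monitoring signal in its own right, since it suggests the system had access to a secret it should not have been able to read.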

The Path Forward

AI represents one of the most transformative technologies of our time, and its potential is enormous. Realizing that potential requires a corresponding investment in security. Organizations must treat AI systems as critical infrastructure and protect them accordingly.

This means moving with urgency, deploying solutions that provide real control, and continuously evolving security practices as AI capabilities advance. It also means recognizing that the risks we face today are not temporary growing pains, but structural challenges that will persist as AI becomes more embedded in our operations.

The Claude leak was not just an isolated incident. It was a signal that the stakes have changed.

The window to get ahead of this is still open, but it is closing quickly.

At PointGuard AI, we are approaching this moment with both urgency and realism. We do not assume we can predict every emerging AI threat. The pace of innovation is too fast, and the landscape is evolving in ways no one can fully anticipate.

What we are committed to is building a security foundation that holds up under that uncertainty. That means combining proven security fundamentals with rapid AI innovation, delivering the visibility, control points, and guardrails enterprises need to protect critical AI systems as they scale.

Our goal is not just to respond to incidents, but to help organizations stay ahead of them. AI will continue to evolve, and so will the threats. But with the right foundation in place, these risks are manageable and often preventable.

The organizations that succeed will not slow down AI adoption. They will secure it and move forward with confidence.