The conversation around enterprise AI has changed rapidly in the past two years. What began as experimentation with generative AI tools is quickly evolving into something far more consequential: agentic AI. Instead of simply generating content, these systems are beginning to plan tasks, interact with applications, and execute workflows autonomously.

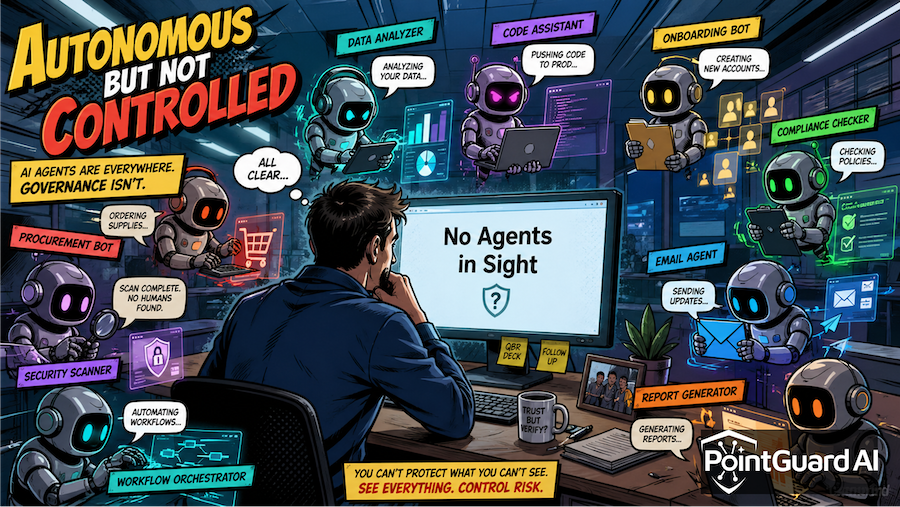

Industry analysts increasingly describe agentic AI as a new form of enterprise infrastructure. Autonomous agents are starting to coordinate workflows, access enterprise systems, and connect data sources across organizations. In this model, AI systems are no longer just tools used by humans. They become active participants in operational processes.

That shift fundamentally changes the risk profile of enterprise AI. If agentic AI becomes critical infrastructure, the security protecting it must also be treated as critical infrastructure.

From Generative Tools to Autonomous Systems

Generative AI systems primarily create text, images, or code in response to user prompts. Agentic AI systems go much further by interacting with software environments, making decisions, and executing tasks on behalf of users or organizations.

In enterprise environments, AI agents are already analyzing financial reports, scheduling actions across SaaS platforms, querying corporate data repositories, and triggering automated workflows. In effect, they function as digital workers embedded inside enterprise systems.

IDC describes this shift as the “agentic pivot,” where enterprise applications themselves begin operating with autonomy and initiative. Over the next several years, agentic AI is expected to transform how businesses operate, embedding itself across workflows and decision-making systems. (IDC)

Once agents start interacting with tools, APIs, and data systems across the organization, they resemble infrastructure components more than traditional software features. Like cloud platforms, identity systems, or data pipelines, agentic systems become a layer that other applications depend on.

When infrastructure fails or is compromised, the impact rarely remains contained. The same will be true for agentic AI.

A New Category of Security Risk

Many organizations initially approached AI security through the lens of model safety, focusing on issues such as prompt injection, hallucinations, or misuse of generative tools. While those concerns remain important, they represent only part of the emerging threat landscape.

Agentic systems introduce new types of risk because they can interact directly with enterprise systems. Instead of simply producing content, these systems can perform actions, access sensitive data, and trigger processes that affect real-world operations.

Several categories of risk are emerging as agentic deployments grow:

- Unauthorized access to enterprise APIs and applications

- Autonomous workflows triggering unintended actions

- Manipulation of system prompts or agent instructions

- Multi-agent interactions producing unpredictable behavior

- Exposure of sensitive enterprise data through agent access

In short, the core security challenge is no longer just about what AI generates. It is about what AI is allowed to do.

The more autonomy organizations grant to these systems, the more critical it becomes to enforce strong identity, authorization, and governance controls.

The McKinsey Incident Shows the Stakes

A recent incident shows that these risks are already real.

Researchers recently demonstrated vulnerabilities in McKinsey’s internal AI platform that exposed tens of millions of chatbot messages and hundreds of thousands of internal document references. The platform served as a central hub for employees interacting with internal data and knowledge systems.

The breach illustrates how enterprise AI deployments can become high-value targets. When AI platforms aggregate corporate knowledge, integrate with internal systems, and provide broad access to data, vulnerabilities can expose large volumes of sensitive information.

Our AI Security Incident Tracker documents the case in detail here:

https://www.pointguardai.com/ai-security-incidents/mckinsey-ai-chatbot-breach-exposes-millions-of-internal-messages

The incident highlights how AI platforms increasingly act as operational hubs for enterprise knowledge and workflows, dramatically increasing the impact of security failures.

Infrastructure Requires Infrastructure-Grade Security

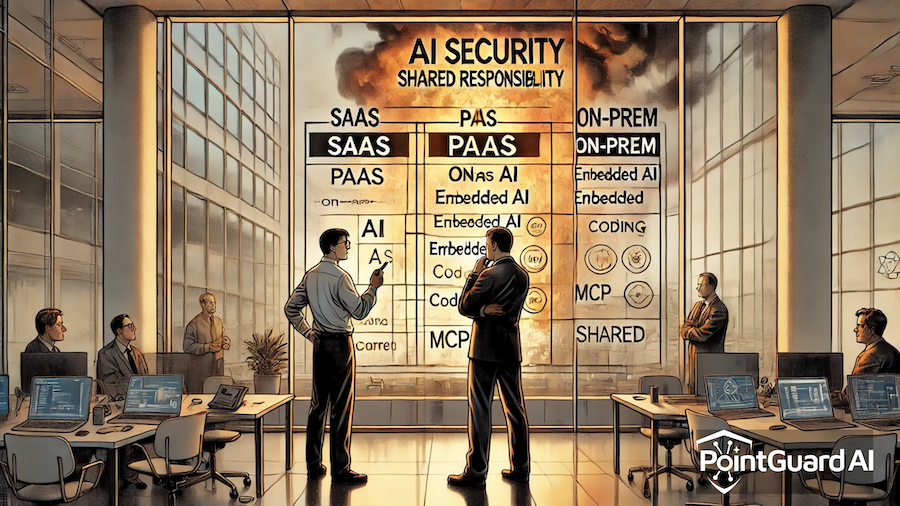

Treating agentic AI as infrastructure requires organizations to rethink how they approach security.

Well-run infrastructure platforms are not deployed without governance, monitoring, and access control mechanisms. Cloud environments, identity systems, and data platforms all include extensive security controls because they sit at the center of enterprise operations.

Agentic AI must be treated the same way.

Organizations deploying AI agents should begin asking several fundamental questions:

- What AI agents, models, and tools exist across the enterprise environment?

- What permissions do those agents have when accessing enterprise systems?

- How are agent activities monitored at runtime?

- What controls prevent agents from triggering unsafe or unauthorized actions?

These questions are not theoretical. Answering them establishes the foundational security controls that make autonomous systems trustworthy.

Without them, enterprises risk creating powerful automation systems that operate outside of governance and oversight.
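To make the questions about inventory, permissions, monitoring, and controls more concrete, here is a minimal Python sketch of an agent inventory with deny-by-default authorization and an audit trail. Everything in it is hypothetical and chosen for illustration: the `AgentRegistry` and `AgentRecord` classes, the agent name, and the action strings do not come from any specific product.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentRecord:
    """One inventory entry: a known agent and the actions it may perform."""
    agent_id: str
    allowed_actions: frozenset


@dataclass
class AgentRegistry:
    """Hypothetical in-memory inventory with deny-by-default authorization."""
    agents: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def register(self, agent_id, allowed_actions):
        # Inventory: record which agents exist and what each may do.
        self.agents[agent_id] = AgentRecord(agent_id, frozenset(allowed_actions))

    def authorize(self, agent_id, action):
        record = self.agents.get(agent_id)
        # Unknown agents and unlisted actions are both denied.
        allowed = record is not None and action in record.allowed_actions
        # Every decision is logged so runtime activity can be reviewed later.
        self.audit_log.append((agent_id, action, allowed))
        return allowed


# Illustrative agent and action names (assumptions, not real identifiers).
registry = AgentRegistry()
registry.register("report-analyzer", {"finance.read_report"})

print(registry.authorize("report-analyzer", "finance.read_report"))      # True
print(registry.authorize("report-analyzer", "finance.approve_payment"))  # False
print(registry.authorize("unregistered-agent", "finance.read_report"))   # False
```

The important design choice is the default: an agent that is missing from the inventory, or an action that was never granted, is refused rather than allowed. A production system would also need persistent storage and authenticated agent identities, which this sketch leaves out.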

How PointGuard AI Helps Secure Agentic Systems

As agentic AI becomes embedded across enterprise workflows, organizations need security approaches designed specifically for autonomous systems.

The PointGuard AI Security Platform provides visibility and control across the entire AI lifecycle, from development to runtime operations. The platform enables organizations to discover AI assets across the environment, including models, agents, MCP servers, and related infrastructure. This discovery capability helps security teams understand where AI is deployed and how these systems interact with enterprise applications and data.

At runtime, PointGuard applies contextual security policies that evaluate the behavior and permissions of agents interacting with enterprise systems. These policies incorporate multiple dimensions of context, including the organizational role of the agent, the situational conditions surrounding a request, behavioral patterns over time, and the trust relationship between systems. This contextual analysis enables organizations to enforce adaptive policies that prevent unauthorized access or unsafe actions.
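As a rough illustration of how a policy might combine these four dimensions of context, consider the following Python sketch. It is not PointGuard's implementation or API. The `RequestContext` fields, the role-to-action table, and the request threshold are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    agent_role: str              # organizational role assigned to the agent
    action: str                  # action the agent is requesting
    within_operating_window: bool  # situational condition at request time
    recent_request_count: int    # behavioral signal over a recent window
    target_trusted: bool         # trust relationship with the target system


# Assumed policy data: which actions each role may perform.
ROLE_ACTIONS = {
    "finance-analyst": {"read_report", "summarize_report"},
    "scheduler": {"create_event", "read_calendar"},
}
MAX_RECENT_REQUESTS = 100  # illustrative behavioral threshold


def evaluate(ctx):
    """Return (allowed, reason), checking each context dimension in turn."""
    if ctx.action not in ROLE_ACTIONS.get(ctx.agent_role, set()):
        return False, "action not permitted for role"
    if not ctx.within_operating_window:
        return False, "outside approved operating window"
    if ctx.recent_request_count > MAX_RECENT_REQUESTS:
        return False, "request rate exceeds behavioral baseline"
    if not ctx.target_trusted:
        return False, "target system is not trusted"
    return True, "all context checks passed"


normal = RequestContext("finance-analyst", "read_report", True, 12, True)
burst = RequestContext("finance-analyst", "read_report", True, 500, True)
print(evaluate(normal))  # (True, 'all context checks passed')
print(evaluate(burst))   # (False, 'request rate exceeds behavioral baseline')
```

The same agent making the same request can get a different answer depending on context, which is what distinguishes this approach from a static permission list. A real policy engine would likely weigh these signals rather than check them in a fixed order.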

PointGuard also introduces a centralized control point through the PointGuard MCP Security Gateway, which provides zero-trust authorization for AI agents interacting with enterprise tools and APIs. By enforcing identity verification and policy controls at the gateway layer, organizations can ensure that agents only access approved resources and operate within defined guardrails.

Security must also begin earlier in the development lifecycle. PointGuard supports secure-by-design AI development practices, including governed prompt management, secure secrets handling, and policy-based human approvals for high-risk operations. These controls help organizations ensure that agent workflows are secure before they are deployed into production environments.
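One way to picture a policy-based human approval is as a gate that an agent's action must pass before it runs. The Python sketch below is a simplified illustration under stated assumptions, not a description of how PointGuard implements the feature. The `HIGH_RISK_ACTIONS` set, the `ApprovalRequired` exception, and the `execute_action` function are hypothetical names.

```python
# Assumed classification of which actions count as high risk.
HIGH_RISK_ACTIONS = {"delete_records", "transfer_funds"}


class ApprovalRequired(Exception):
    """Raised when a high-risk action is attempted without human sign-off."""


def execute_action(action, handler, approved_by=None):
    """Run handler() for the action, requiring an approver for high-risk ones."""
    if action in HIGH_RISK_ACTIONS and approved_by is None:
        raise ApprovalRequired(f"'{action}' needs human approval before it runs")
    return handler()


# Low-risk actions run directly.
print(execute_action("read_report", lambda: "report loaded"))

# High-risk actions are blocked until a named human approves them.
try:
    execute_action("transfer_funds", lambda: "funds sent")
except ApprovalRequired as exc:
    print(f"blocked: {exc}")

print(execute_action("transfer_funds", lambda: "funds sent", approved_by="alice"))
```

Placing the check in the execution path, rather than in the agent's prompt or instructions, means the agent cannot bypass it by reasoning its way around the rule. A real deployment would also verify the approver's identity and record the approval.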

The Foundation of Trustworthy Agentic AI

Agentic AI has the potential to transform enterprise productivity by automating complex workflows and enabling new forms of human-machine collaboration. However, as these systems become more autonomous and interconnected, they also become more consequential.

IDC notes that agentic AI systems are moving toward the center of business operations, where they may influence mission-critical workflows and decisions. (IDC)

Infrastructure that drives business operations must be reliable, governed, and secure. If agentic AI becomes a foundational layer of enterprise infrastructure, the same principles must apply.

Security is not an optional feature in this environment. It is the foundation that allows organizations to deploy autonomous systems safely, scale AI innovation responsibly, and maintain trust in the digital infrastructure that increasingly runs the enterprise.